In mathematics, solving a system of linear equations is one of the most fundamental skills for understanding relationships between variables. A system of linear equations is simply a set of two or more linear equations involving the same variables, where each equation represents a straight line (in two variables) or a flat plane (in three variables). These systems appear in fields such as physics, economics, engineering, computer science, and many more. Understanding how to solve them means understanding how different constraints interact to produce a single answer, multiple answers, or sometimes no answer at all.

Definition of a system of linear equations

A system of linear equations is a collection of equations where each equation is linear, meaning that each term is either a constant or the product of a constant and a single variable. In general form, a linear equation in two variables can be written as

ax + by = c, where a, b and c are constants and a and b are not both zero.

For a system, we might have two such equations:

- a₁x + b₁y = c₁

- a₂x + b₂y = c₂

In this case, the solution to the system is the set of values for x and y that satisfy both equations at the same time.

Types of solutions

When working with systems of linear equations, the solution set falls into exactly one of three categories:

- One unique solution: the lines intersect at exactly one point.

- Infinitely many solutions: the lines lie exactly on top of each other, meaning every point on one line is also on the other.

- No solution: the lines are parallel but have different intercepts, meaning they never meet.
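The three cases can be distinguished without drawing the lines. The following is a minimal Python sketch, assuming each equation is a genuine line (its x and y coefficients are not both zero); the function name `classify_2x2` is hypothetical. It relies on the fact that the two lines are parallel or identical exactly when a₁b₂ − a₂b₁ = 0.

```python
def classify_2x2(a1, b1, c1, a2, b2, c2):
    """Classify the system a1*x + b1*y = c1, a2*x + b2*y = c2.

    Assumes each equation is a genuine line (a and b not both zero).
    Returns "unique", "infinite" or "none".
    """
    det = a1 * b2 - a2 * b1
    if det != 0:
        return "unique"      # different slopes: the lines cross once
    # det == 0: the lines are parallel or identical.
    # They coincide only if the constants share the same ratio as well.
    if a1 * c2 - a2 * c1 == 0 and b1 * c2 - b2 * c1 == 0:
        return "infinite"    # the same line written twice
    return "none"            # parallel lines with different intercepts
```

For instance, 2x + y = 8 with x − y = 1 is classified as unique, x + y = 2 with 2x + 2y = 4 as infinite, and x + y = 2 with x + y = 3 as none.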

In three variables, similar categories apply, but instead of lines intersecting, we consider the intersection of planes.

Methods of solving a system of linear equations

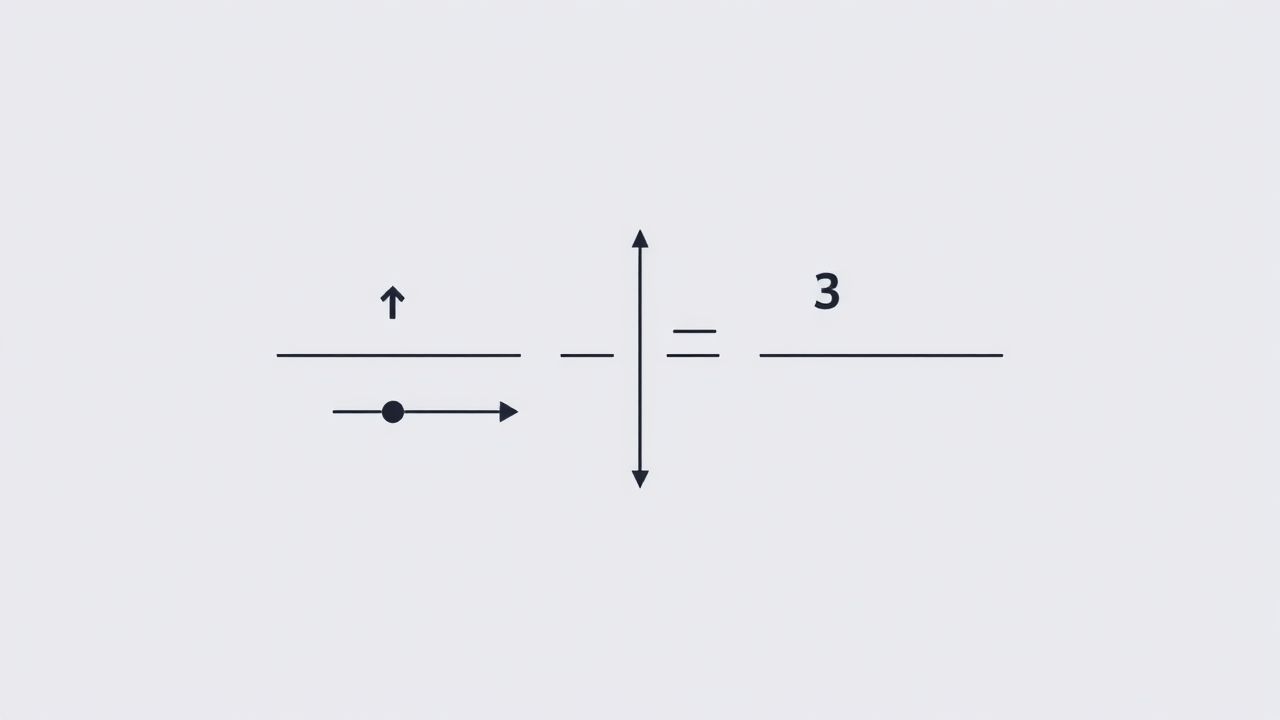

Graphical method

The graphical method involves plotting each equation on the same coordinate plane and finding the intersection point(s). While this method gives a visual understanding, reading coordinates off a graph is only approximate, so it is not practical for precise solutions, especially when they involve fractions or large numbers.

Substitution method

In the substitution method, you solve one equation for one variable in terms of the others, then substitute this expression into the other equation(s). This method works well when a variable is easy to isolate, for example when its coefficient is 1 or −1.
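As an illustration, here is a minimal Python sketch of substitution for two equations a₁x + b₁y = c₁ and a₂x + b₂y = c₂. The name `solve_by_substitution` is hypothetical, and the sketch assumes a₁ ≠ 0 (so x can be isolated from the first equation) and that the system has exactly one solution. Exact fractions from the standard library avoid rounding errors.

```python
from fractions import Fraction

def solve_by_substitution(a1, b1, c1, a2, b2, c2):
    """Solve a1*x + b1*y = c1, a2*x + b2*y = c2 by substitution.

    Assumes a1 != 0 and a unique solution.
    """
    a1, b1, c1, a2, b2, c2 = map(Fraction, (a1, b1, c1, a2, b2, c2))
    # Step 1: isolate x in the first equation: x = (c1 - b1*y) / a1
    # Step 2: substitute into the second equation and collect y terms:
    #   a2*(c1 - b1*y)/a1 + b2*y = c2
    #   => (b2 - a2*b1/a1) * y = c2 - a2*c1/a1
    coeff_y = b2 - a2 * b1 / a1
    rhs = c2 - a2 * c1 / a1
    y = rhs / coeff_y
    # Step 3: put y back into the expression for x
    x = (c1 - b1 * y) / a1
    return x, y
```

Passing the equations in the order x − y = 1, then 2x + y = 8, isolates x from x − y = 1 just as in the worked 2 × 2 example; the intermediate values are then coeff_y = 3 and rhs = 6, matching 3y = 6.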

Elimination method

The elimination method involves adding or subtracting equations to eliminate one variable, reducing the system to fewer variables until you can solve for all of them. It is especially efficient when a variable has equal or opposite coefficients in two equations, or can be given them by multiplying one equation by a constant.
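A minimal Python sketch of elimination for two equations, assuming a unique solution (a₁b₂ − a₂b₁ ≠ 0); the name `solve_by_elimination` is hypothetical. It scales the equations so that the y terms match, subtracts them to cancel y, and then back-substitutes.

```python
from fractions import Fraction

def solve_by_elimination(a1, b1, c1, a2, b2, c2):
    """Eliminate y from a1*x + b1*y = c1 and a2*x + b2*y = c2.

    Assumes a unique solution (a1*b2 - a2*b1 != 0).
    """
    a1, b1, c1, a2, b2, c2 = map(Fraction, (a1, b1, c1, a2, b2, c2))
    # Multiply eq1 by b2 and eq2 by b1, then subtract; the y terms cancel:
    #   (a1*b2 - a2*b1) * x = c1*b2 - c2*b1
    x = (c1 * b2 - c2 * b1) / (a1 * b2 - a2 * b1)
    # Substitute x back into an equation with a nonzero y coefficient
    if b1 != 0:
        y = (c1 - a1 * x) / b1
    else:
        y = (c2 - a2 * x) / b2
    return x, y
```

In the worked 2 × 2 example the y coefficients are already opposite (+1 and −1), so simply adding the equations gives 3x = 9; the general scaling step matters only when no such shortcut exists.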

Matrix method (Gaussian elimination)

This method uses matrix operations to systematically reduce the system to a simpler equivalent form, such as row-echelon form, from which the solution can be read off by back-substitution. Gaussian elimination is widely used for larger systems and is the foundation for computer-based solvers.

Example of solving a 2 × 2 system

Consider the system:

- 2x + y = 8

- x − y = 1

Using substitution:

- From the second equation, x = 1 + y.

- Substitute into the first equation: 2(1 + y) + y = 8.

- 2 + 2y + y = 8 ⇒ 3y = 6 ⇒ y = 2.

- Then x = 1 + 2 = 3.

The solution is x = 3, y = 2.

Systems with three variables

A system with three variables (x, y, z) can be written as:

- a₁x + b₁y + c₁z = d₁

- a₂x + b₂y + c₂z = d₂

- a₃x + b₃y + c₃z = d₃

Solving these requires more steps, often using elimination or matrix methods. In three dimensions, each equation represents a plane, and the solution set is the common intersection of these planes: a single point, a line, a whole plane (when all three planes coincide), or no intersection at all.

Applications in real life

Systems of linear equations are used in many practical situations, such as:

- Economics: determining the equilibrium point between supply and demand equations.

- Engineering: calculating forces in static structures.

- Computer graphics: transformations and rendering processes often involve solving systems of equations.

- Science: balancing chemical equations and modeling interactions between variables.

Special cases in systems

Dependent systems

A dependent system has infinitely many solutions. In two variables this happens when the equations describe the same line; in three variables the planes may coincide or all pass through a common line. Mathematically, at least one equation can be derived from the others as a combination of them, so it adds no new constraint.

Inconsistent systems

An inconsistent system has no solution because the equations represent constraints that cannot be satisfied together, such as parallel lines with different intercepts.

Consistent independent systems

These systems have exactly one solution and occur when the equations represent geometric objects that intersect in exactly one point.
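These three cases can also be detected mechanically for any number of variables by comparing ranks, a test known as the Rouché–Capelli theorem (this goes slightly beyond the text above). The system is inconsistent when the augmented matrix has a larger rank than the coefficient matrix, and it has a unique solution when the common rank equals the number of variables. The Python sketch below is illustrative; the helper names `rank` and `classify` are hypothetical, and the matrices are plain lists of rows.

```python
from fractions import Fraction

def rank(rows):
    """Rank of a matrix (list of rows) via exact row reduction."""
    m = [[Fraction(v) for v in row] for row in rows]
    r = 0  # index where the next pivot row goes
    n_cols = len(m[0]) if m else 0
    for col in range(n_cols):
        # Look for a nonzero entry in this column, at or below row r
        pivot = next((i for i in range(r, len(m)) if m[i][col] != 0), None)
        if pivot is None:
            continue  # no pivot in this column
        m[r], m[pivot] = m[pivot], m[r]
        # Clear the entries below the pivot
        for i in range(r + 1, len(m)):
            factor = m[i][col] / m[r][col]
            m[i] = [a - factor * p for a, p in zip(m[i], m[r])]
        r += 1
    return r

def classify(A, B):
    """Return 'unique', 'infinite' or 'none' for the system AX = B."""
    augmented = [row + [b] for row, b in zip(A, B)]
    rank_a, rank_aug = rank(A), rank(augmented)
    if rank_a < rank_aug:
        return "none"      # inconsistent
    if rank_a == len(A[0]):
        return "unique"    # consistent independent
    return "infinite"      # dependent
```

For example, x + y + z = 1, 2x + 2y + 2z = 2, x − y = 0 gives rank 2 for both matrices with three variables, so the system is dependent: its planes share a line.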

Matrix representation

A system of linear equations can be expressed in matrix form as:

AX = B

where:

- A is the coefficient matrix

- X is the column matrix of variables

- B is the column matrix of constants

Using this notation, solving the system becomes a matter of applying matrix operations or finding the inverse of matrix A (if it exists) to compute X = A⁻¹B.
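For a 2 × 2 system the inverse has a closed form: if A = [[a, b], [c, d]] and ad − bc ≠ 0, then A⁻¹ = (1 / (ad − bc)) · [[d, −b], [−c, a]]. The Python sketch below applies that formula and multiplies by B; the function names `inverse_2x2` and `solve_with_inverse` are hypothetical, and larger systems would normally use elimination instead.

```python
from fractions import Fraction

def inverse_2x2(A):
    """Inverse of a 2x2 matrix [[a, b], [c, d]], or None if singular."""
    (a, b), (c, d) = A
    det = Fraction(a * d - b * c)
    if det == 0:
        return None  # no inverse: the system is dependent or inconsistent
    return [[d / det, -b / det],
            [-c / det, a / det]]

def solve_with_inverse(A, B):
    """Compute X = A^-1 B for a 2x2 system; None if A is singular."""
    inv = inverse_2x2(A)
    if inv is None:
        return None
    # Matrix-vector product: X[i] = sum over k of inv[i][k] * B[k]
    return [sum(inv[i][k] * B[k] for k in range(2)) for i in range(2)]
```

For the worked example, A = [[2, 1], [1, −1]] and B = [8, 1] give A⁻¹ = [[1/3, 1/3], [1/3, −2/3]] and X = [3, 2].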

Gaussian elimination steps

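The Gaussian elimination method follows these steps:

- Write the augmented matrix for the system (the coefficients, with the constants appended as an extra column).

- Use elementary row operations (swapping two rows, multiplying a row by a nonzero constant, adding a multiple of one row to another) to create zeros below the leading entry (pivot) in each column, giving row-echelon form. Scaling each pivot to 1 is optional.

- Back-substitute, starting from the last row, to find the variables’ values.

This systematic process finds the solution efficiently when one exists, and it also exposes the other cases: a row that reads 0 = k with k ≠ 0 signals an inconsistent system, while in a consistent system a variable column without a pivot signals infinitely many solutions.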
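The steps above can be sketched in Python for a square system with a unique solution. This is a minimal illustration with a hypothetical function name, `gaussian_solve`; it uses exact fractions and does not scale pivots to 1, dividing by each pivot during back-substitution instead, which gives the same result.

```python
from fractions import Fraction

def gaussian_solve(A, B):
    """Solve AX = B (n equations, n unknowns) by Gaussian elimination.

    Assumes a unique solution; raises ValueError otherwise.
    """
    n = len(A)
    # 1. Augmented matrix [A | B], using exact fractions
    m = [[Fraction(v) for v in row] + [Fraction(b)] for row, b in zip(A, B)]
    # 2. Forward elimination to row-echelon form
    for col in range(n):
        pivot = next((i for i in range(col, n) if m[i][col] != 0), None)
        if pivot is None:
            raise ValueError("no unique solution")
        m[col], m[pivot] = m[pivot], m[col]  # row swap
        for i in range(col + 1, n):
            # Subtract a multiple of the pivot row to zero out m[i][col]
            factor = m[i][col] / m[col][col]
            m[i] = [a - factor * p for a, p in zip(m[i], m[col])]
    # 3. Back-substitution, from the bottom row up
    x = [Fraction(0)] * n
    for i in reversed(range(n)):
        known = sum(m[i][j] * x[j] for j in range(i + 1, n))
        x[i] = (m[i][n] - known) / m[i][i]
    return x
```

For example, the system x + y + z = 6, 2y + 5z = −4, 2x + 5y − z = 27 reduces to a triangular form whose last row gives z = −2, after which back-substitution yields y = 3 and x = 5.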

Solving with determinants (Cramer’s Rule)

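Cramer’s Rule is a formula-based method that applies when the system has the same number of equations as variables and the determinant of the coefficient matrix is nonzero. Each variable is a ratio of two determinants: xᵢ = det(Aᵢ) / det(A), where Aᵢ is the coefficient matrix A with its i-th column replaced by the constant column B. Because every variable needs its own determinant, and determinants become expensive to compute as the system grows, the rule is best suited to small systems.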
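A minimal Python sketch of Cramer’s Rule, assuming integer (or fraction) coefficients; the names `det` and `cramer` are hypothetical. The determinant is computed by cofactor expansion along the first row, which is simple but takes time growing factorially with the size, which illustrates why the rule is reserved for small systems.

```python
from fractions import Fraction

def det(M):
    """Determinant by cofactor expansion along the first row (small n only)."""
    if len(M) == 1:
        return M[0][0]
    total = 0
    for j in range(len(M)):
        # Minor: drop the first row and column j
        minor = [row[:j] + row[j + 1:] for row in M[1:]]
        total += (-1) ** j * M[0][j] * det(minor)
    return total

def cramer(A, B):
    """Solve AX = B with Cramer's Rule: x_i = det(A_i) / det(A)."""
    d = det(A)
    if d == 0:
        raise ValueError("det(A) = 0: Cramer's Rule does not apply")
    solution = []
    for i in range(len(A)):
        # A_i: A with column i replaced by the constants B
        A_i = [row[:i] + [b] + row[i + 1:] for row, b in zip(A, B)]
        solution.append(Fraction(det(A_i), d))
    return solution
```

For the worked 2 × 2 example, det(A) = −3, det(A₁) = −9 and det(A₂) = −6, so x = 3 and y = 2.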

Checking solutions

Once you have a proposed solution, it is always good practice to substitute the values back into the original equations to confirm they satisfy all conditions. This step verifies that no mistakes occurred during algebraic manipulations.
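The check is easy to automate. In this Python sketch (the name `satisfies` is hypothetical), each row of the coefficient matrix is multiplied by the proposed values and compared with the corresponding constant. A small tolerance via the standard `math.isclose` is assumed so that floating-point answers are not rejected for rounding noise.

```python
import math

def satisfies(A, B, X, tol=1e-9):
    """Substitute X into every equation of AX = B and compare both sides."""
    for row, b in zip(A, B):
        lhs = sum(a * x for a, x in zip(row, X))
        if not math.isclose(lhs, b, rel_tol=tol, abs_tol=tol):
            return False  # this equation is not satisfied
    return True
```

For the worked example, x = 3, y = 2 passes (2·3 + 2 = 8 and 3 − 2 = 1), while x = 2, y = 3 fails because 2·2 + 3 = 7, not 8.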

Importance in advanced mathematics

The concept of systems of linear equations extends beyond algebra into linear algebra, where ideas like vector spaces, rank, nullity, and eigenvalues arise from the study of linear systems. Mastering this topic is essential for more advanced studies in applied mathematics and computational fields.

Systems of linear equations provide a powerful framework for modeling and solving real-world problems involving multiple variables and constraints. From simple substitution and elimination in two variables to complex matrix operations in large systems, the principle remains the same: find the set of variable values that satisfy all equations simultaneously. Understanding this concept opens the door to deeper areas of mathematics, science, and engineering, making it an indispensable part of problem-solving and analytical thinking.